"AI is scaling faster than the systems built to govern it."

H.E. Khalfan Belhoul, CEO, Dubai Future Foundation. Foreword to AI in Motion, IBM Institute for Business Value and Dubai Future Foundation, April 2026.

In our work across the GCC market, the question senior leaders are now bringing to us has shifted from whether their AI investment will work technically to whether the AI it underwrites will still be legal across the jurisdictions they sell into eighteen months from now. AI regulation has moved from academic conversation to commercial constraint, and the next investment is being priced against a different test than the last one.

Two readings most boards bring to that question are wrong. The first is to treat AI regulation as a US-versus-China story; it hasn't been since the EU AI Act entered into force in 2024. The second is to read the GCC as a soft-regulation zone. The IAPP's 2025 Global AI Law and Policy Tracker does exactly this, grouping Saudi Arabia and the UAE with Bangladesh, Egypt, Indonesia and five other jurisdictions in a "strategy-only" cluster. That label has been wrong for at least eighteen months, and the cost of believing it is showing up in audit findings.

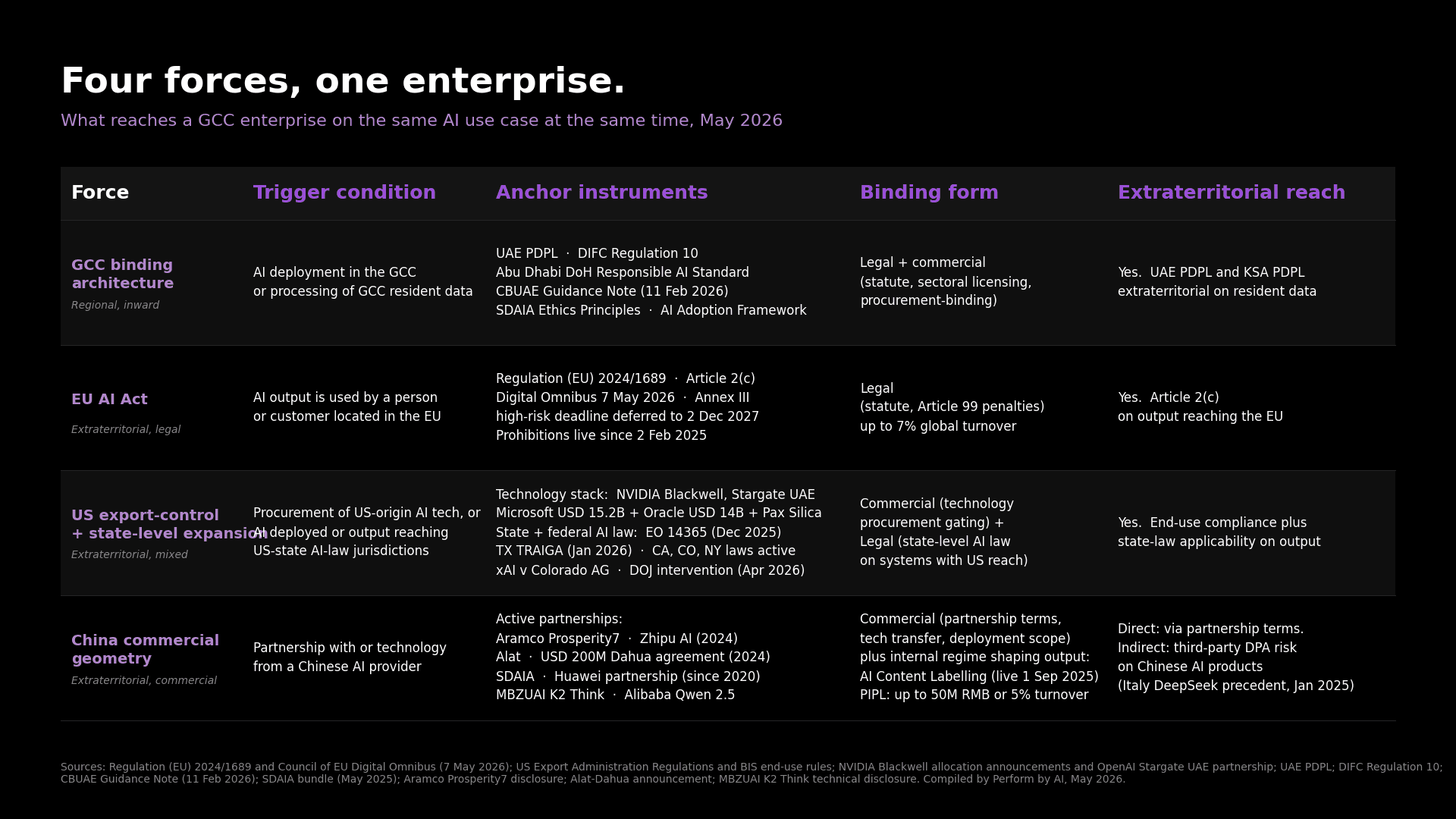

The accurate reading is that the GCC enterprise answers to four regulatory forces on the same use case at the same time: its own emerging binding architecture, the EU AI Act's use-case test, US export-control conditionality, and China's commercial geometry under managed pressure. Each binds through a different mechanism, and the major global comparator publications treat them separately. The full exposure surface emerges only when the four are read together.

What is the GCC's actual AI regulatory architecture, and why do the global trackers miss it?

The architecture is what the global trackers miss.

"Around the world, AI policy is no longer just about regulation."

Nestor Maslej, Lead Author, AI Index 2026, Stanford Institute for Human-Centered AI. Chapter 8: Policy and Governance.

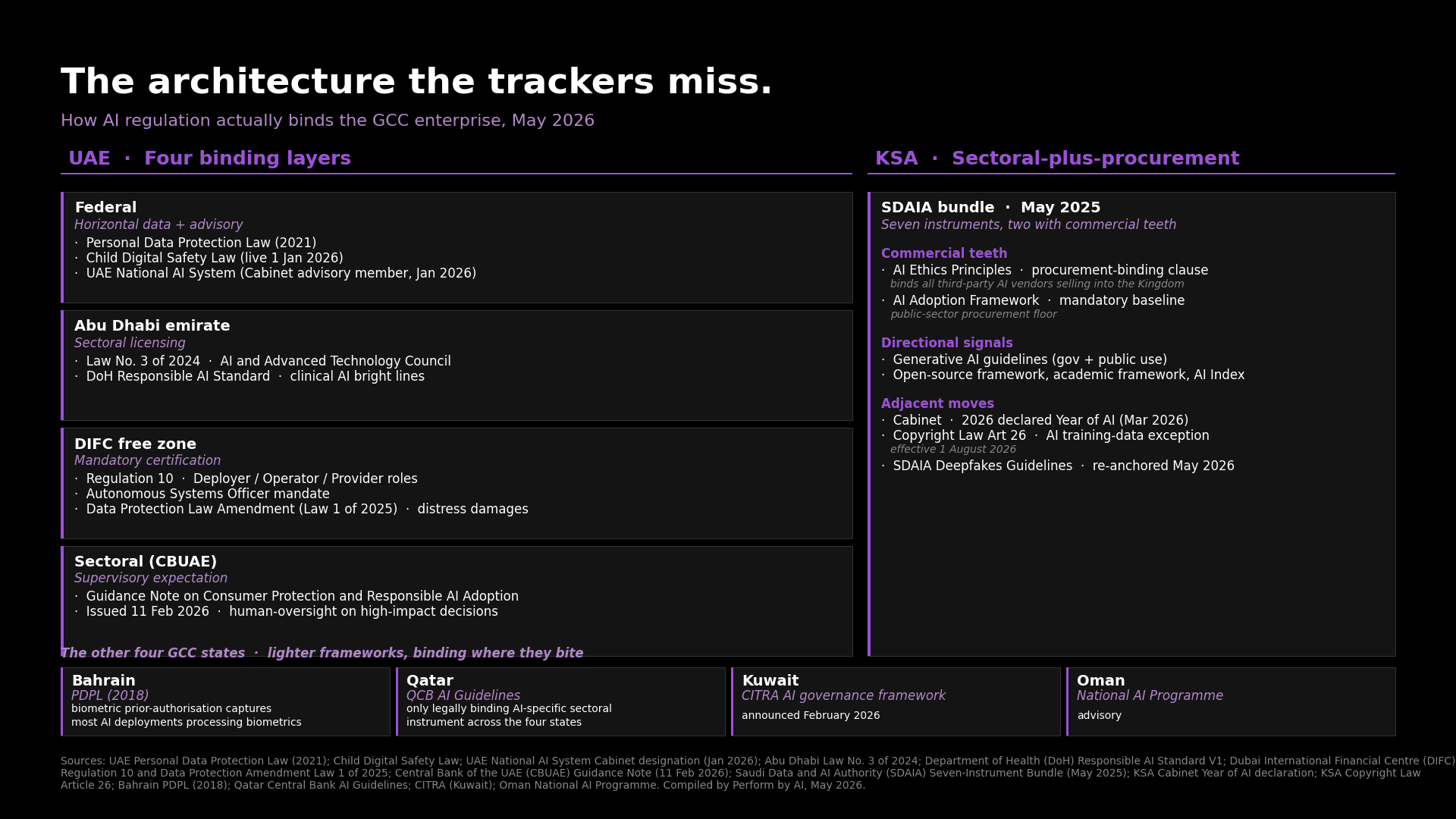

The UAE has not enacted a single federal AI Act. It operates a four-layer regulatory stack, and each layer binds different surfaces. At the federal layer, the Personal Data Protection Law of 2021 binds automated decision-making and cross-border data transfers across the economy. The Child Digital Safety Law took effect on 1 January 2026. The UAE National AI System became operational in January 2026 as an advisory member of the Cabinet, an institutional move no other jurisdiction has made.

At the Abu Dhabi emirate layer, Law No. 3 of 2024 established the Artificial Intelligence and Advanced Technology Council. The Department of Health's Responsible AI Standard sets bright lines for clinical AI: high-residual-risk applications fall outside what licensed entities are permitted to operate, and those entities answer to the Standard on inspection.

At the DIFC free-zone layer, Regulation 10 is the first horizontal, AI-specific binding instrument in the MEASA region. It introduces a three-role architecture for Deployer, Operator, and Provider. Mandatory certification by accredited bodies and an Autonomous Systems Officer mandate complete the framework. The companion DIFC data protection amendment adds a direct private right of action with distress damages, meaning data subjects can sue without proving economic loss.

At the sectoral layer, the Central Bank of the UAE issued its Guidance Note on Consumer Protection and Responsible Adoption of AI on 11 February 2026. The note's operating teeth are in the human-oversight expectations it sets for high-impact decisions. Fully automated credit or insurance decisions sit outside those expectations, meaning the licensed entity assumes the regulatory risk for any outcome those models produce. In supervisory language, that reads as a finding against you on examination.

Saudi Arabia operates differently. SDAIA bundled seven instruments in May 2025; most are guidance, but two have commercial teeth. The Ethics Principles carry a procurement-binding clause: any third-party AI vendor selling into the Kingdom is contractually held to them. The AI Adoption Framework is a mandatory baseline for public-sector procurement. The other five (the generative AI guidelines for government, the generative AI guidelines for public use, the open-source framework, the academic framework, and the AI Index) carry less commercial weight, but they signal the direction. The Cabinet declared 2026 the Year of AI in March; the new copyright law brings an AI-training-data exception into force on 1 August 2026; the SDAIA Deepfakes Guidelines were re-anchored publicly in May 2026.

The other four GCC states operate lighter frameworks that bind where they bite. Bahrain's 2018 personal data protection law retains a biometric prior-authorisation regime that captures most AI deployments processing biometrics. The Qatar Central Bank's AI Guidelines for licensed financial firms are the only legally binding AI-specific sectoral instrument across the four states. Kuwait's CITRA announced an AI governance framework in February 2026. Oman's National AI Programme remains advisory.

The GCC has more AI regulation in force than the global trackers admit, and almost all of it binds through different mechanisms than the EU AI Act's horizontal approach: procurement-binding in KSA, sectoral-licensing-binding in Abu Dhabi healthcare, mandatory certification in DIFC, supervisory-expectation-binding at CBUAE. All four are operating today. None is captured by the "strategy-only" cluster label, and the cost of the misreading is that compliance teams have budgeted for a tracker classification while their regulators are operating to a different standard.

Albous, Al-Jayyousi and Stephens (2025) characterise the regional regime as soft regulation, and that aggregate-level framing is defensible. But "soft" describes the regime in aggregate only; inside the individual regimes (DIFC, Abu Dhabi DoH, CBUAE, the KSA procurement clauses), the operational reach is direct. That is the reading boards need.

Exhibit 1. The architecture the trackers miss. UAE's four binding layers, the KSA SDAIA bundle's two commercial-teeth instruments, and the four lighter frameworks across Bahrain, Qatar, Kuwait, and Oman.

Does the EU AI Act reach GCC enterprises selling into Europe?

Most GCC boards still treat the EU AI Act as a Brussels problem that arrives only when they open a European entity. They have it backwards.

The extraterritorial test turns on the use case. A regional bank in Riyadh scoring loan applications for a European customer is inside the AI Act today. So is an insurer in Dubai pricing motor cover for a European policyholder, and an HR-tech firm in Doha filtering candidates for a European employer. None of them needs to open an EU entity to fall inside the regulation. The output reaching the EU person is enough.

The timeline matters as much as the test. Prohibitions on unacceptable-risk AI have been in force since 2 February 2025. The high-risk obligations were originally due to bind on 2 August 2026; the Digital Omnibus trilogue agreement of 7 May 2026 deferred the binding date of Annex III categories to 2 December 2027. The 18-month window in front of the GCC enterprise is the period to put the provider-obligation stack in place: risk management, data governance, technical documentation, record-keeping, transparency, human oversight, and accuracy. None of these is optional after December 2027, and Article 99 sets penalties of up to 7% of global annual turnover for prohibited-practice violations.
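The window arithmetic can be checked directly. The following is a minimal sketch in Python using only the dates quoted above; the `MILESTONES` table and the `whole_months_between` helper are illustrative names rather than part of any official tooling, and the reference date is an assumption that a planning team would replace with its own start date.

```python
from datetime import date

# Dates as quoted in the text; the keys are shorthand labels for illustration.
MILESTONES = {
    "prohibitions_in_force": date(2025, 2, 2),
    "omnibus_trilogue_agreement": date(2026, 5, 7),
    "high_risk_original": date(2026, 8, 2),
    "annex_iii_deferred": date(2027, 12, 2),
}


def whole_months_between(start: date, end: date) -> int:
    """Count complete calendar months from start to end (0 if end precedes start)."""
    months = (end.year - start.year) * 12 + (end.month - start.month)
    if end.day < start.day:
        months -= 1  # the final month is not yet complete
    return max(months, 0)


# Assumption: planning starts on the date of the trilogue agreement.
reference = MILESTONES["omnibus_trilogue_agreement"]
window = whole_months_between(reference, MILESTONES["annex_iii_deferred"])
print(f"Whole months to the deferred Annex III date: {window}")
```

Measured from the agreement date, the window comes to 18 whole months, which matches the figure used in the text; a later start date shortens it accordingly.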

Enforcement is moving even where formal designations have not. Only three of twenty-seven EU member states had named their national AI competent authority by mid-2025. Italy's data protection authority showed in January 2025 that the national-data-protection-authority route works without waiting for full implementation: its order against DeepSeek stood up on existing data-protection grounds, with the AI Act framework not needed.

This is the EU's strategic pattern. The Brussels Effect that GDPR established as "the world's strictest privacy regime now de facto enforces globally" is now operating on AI through Article 2(1)(c), which brings third-country providers and deployers into scope when the system's output is used in the Union. A GCC enterprise that designs the AI investment to satisfy the EU AI Act will, with marginal addition, also satisfy the GCC binding architecture and the China content regime. A GCC enterprise that builds to a lighter floor and tries to retrofit upward will find the cost is not marginal.

What did the US do, and what does it mean for the GCC?

The conventional reading is that Washington deregulated AI in 2025. It is wrong.

Executive Order 14365 of 11 December 2025 framed federal policy: maintain America's competitive lead through deregulatory action at the federal level. But the federal level is not where AI law operates in the US. The federal moratorium that would have preempted state laws was defeated 99-1 in the Senate in July 2025. By April 2026, 150 state AI bills were active across 31 states, and the first commercial-grade laws were live: Texas TRAIGA on 1 January 2026, Colorado AI Act under litigation. New York's RAISE Act for foundation-model developers moved through both chambers in May 2026, with the next phase tightening obligations on systems above the 10^26 FLOP training threshold.

Three federal levers are operating. The first is litigation: a Department of Justice task force standing up to intervene in state AI-law cases. The second is preemption: federal communications and trade regulators framing what state laws cannot do. The third is conditional funding: the $42.45 billion broadband programme runs subgrantee requirements into state contracts, and the federal administrator cautioned state offices in early May 2026 against altering those clauses.

For the GCC enterprise, the practical exposure runs in two directions. First, on the technology stack: NVIDIA Blackwell allocations to G42 and Humain, OpenAI's Stargate UAE partnership, Microsoft's $15.2 billion UAE commitment, Oracle's $14 billion KSA commitment, and the Pax Silica Declaration all sit under US export-control compliance. The April 2025 G42 divestment from ByteDance and Chinese hardware was the operational test of those clauses. Second, on state-level AI law applicability: a GCC firm selling AI services into Texas, California, Colorado, New York, or any of the 31 states with live AI bills becomes subject to that state's AI law once the AI output reaches the state.

The xAI v Colorado AG litigation from April 2026, with the DOJ intervening, is the federal template for what comes next. The case framing positions sovereign AI policy as a state-versus-federal issue, with sovereign immunity arguments at the heart of the federal response. The outcome will define what state AI laws can and cannot do under US constitutional limits. A GCC firm with US state-level exposure should track this case; the answer here resets US AI regulatory reach.

How does China reach the GCC on AI?

China's reach into the GCC operates on commercial geometry, not legal extraterritoriality.

The active partnerships are the commercial geometry. Aramco's Prosperity7 joined Zhipu AI's funding round in 2024. Alat closed a $200 million Dahua agreement the same year. SDAIA has run a Huawei partnership since 2020. MBZUAI's K2 Think model, the UAE-built model that achieves competitive performance on agentic reasoning benchmarks, is built on Alibaba's Qwen 2.5. No GCC state has banned DeepSeek or comparable Chinese AI products.

The internal regulatory framework China runs at home is dense, but the mechanism is different from both the EU and the US. The content regime sequenced fast: Algorithmic Recommendation Provisions in March 2022, Deep Synthesis Provisions in January 2023, Generative AI Interim Measures in August 2023 (issued jointly by seven agencies), and AI Content Labelling Measures issued in March 2025 and effective from 1 September 2025. The Labelling Measures are the first national regime to mandate cross-modal content-provenance labelling across text, image, audio and video, through a dual architecture of user-visible labels and machine-readable metadata.

The adjacent stack is heavier. The Personal Information Protection Law carries penalties of up to 50 million RMB or 5% of annual turnover, plus criminal liability of up to seven years. The Data Security Law and the Cybersecurity Law add further obligations on cross-border data, data classification, and infrastructure-grade security review. Inside China, this stack is operational; outside China, it travels with Chinese AI products as a vendor governance dimension that GCC enterprises now factor into procurement.

The GCC posture is itself a choice. US export-control conditionality has forced specific divestments (G42 from ByteDance and from Chinese hardware), but the broader posture is that the GCC operates with both US and Chinese AI stacks under managed pressure. Italy's order against DeepSeek in January 2025 showed what alternative-extraterritorial reach looks like operationally; similar actions followed across 2025 in Australia, South Korea, Taiwan, Germany, France, the Netherlands, India and US federal departments. Any jurisdiction with a data-protection authority or sectoral regulator can act on Chinese AI providers without waiting for treaty-level reciprocity. The GCC has not joined that pattern, and that absence is a posture choice with cost on both sides.

Exhibit 2. Four reach mechanisms acting on the same GCC enterprise on the same use case at the same time, mapped against trigger, anchor instruments, binding form, and reach.

What changes when all four forces act on the same enterprise at the same time?

The integration is the analysis the comparator publications miss. Stanford HAI's AI Index 2026 (Chapter 8) tracks 59 generative-AI-focused regulations from US federal agencies in 2024 (double the prior year) and 75 AI-mentioning Chinese national policies in 2024. BCG's Refining Oversight 2026 catalogues regulatory bifurcation across regimes. KPMG's UAE AI Charter (with the World Governments Summit, February 2025) tracks regional adoption signals. None of the three reads the four-mechanism integration from the GCC enterprise's perspective. The IAPP 2025 Global AI Law and Policy Tracker, which is the most-cited global tracker, gives the GCC the worst-fitting label currently in circulation.

The integrated reading is concrete. A GCC enterprise running an AI-enabled hiring screen for a multinational client today triggers, on a single use case, four reach mechanisms: the UAE DIFC certification regime (where Regulation 10 binds the screen if the data subjects are in DIFC), the EU AI Act high-risk classification under Annex III (where the output reaches an EU person), US state-level employment-AI law (where the output reaches a US state with employment AI rules, like California's automated-decision-system regulations for employment), and China commercial geometry (where the underlying model has any China-touching components). The screen is the same screen. The four regimes do not reconcile with each other automatically. Compliance with the strictest applicable jurisdiction is the operational floor.
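The trigger logic in the hiring-screen example can be expressed as a simple checklist. The sketch below is illustrative only: the `UseCase` fields, the labels, and the `triggered_mechanisms` function are hypothetical names that restate the four conditions in the paragraph above, and they are a deliberate simplification, not a statement of any regime's legal test.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    """Hypothetical description of one AI use case; field names are assumptions."""
    name: str
    data_subjects_in_difc: bool
    output_reaches_eu_person: bool
    output_reaches_us_state_with_ai_rules: bool
    model_has_china_components: bool


def triggered_mechanisms(uc: UseCase) -> list[str]:
    """Return the reach mechanisms from the text whose trigger condition is met."""
    checks = [
        (uc.data_subjects_in_difc, "DIFC Regulation 10 certification"),
        (uc.output_reaches_eu_person, "EU AI Act Annex III high-risk"),
        (uc.output_reaches_us_state_with_ai_rules, "US state employment-AI law"),
        (uc.model_has_china_components, "China commercial geometry"),
    ]
    return [label for hit, label in checks if hit]


# The hiring screen from the text, with every trigger condition present.
hiring_screen = UseCase(
    name="AI-enabled hiring screen",
    data_subjects_in_difc=True,
    output_reaches_eu_person=True,
    output_reaches_us_state_with_ai_rules=True,
    model_has_china_components=True,
)

for mechanism in triggered_mechanisms(hiring_screen):
    print(mechanism)
```

The sketch only enumerates which mechanisms apply. It does not rank them by strictness, because that ranking depends on the obligations each regime attaches to the specific use case and needs legal review rather than a lookup.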

"This lapse in AI governance is likely to erode confident AI investment, compliance and public trust."

Bruno Sarda Chakraborty, Managing Director, Accenture. Foreword to Advancing Responsible AI Innovation: A Playbook, World Economic Forum and Accenture, 2025.

BCG's Refining Oversight 2026 reports 90% of enterprises now contend with conflicting AI laws across jurisdictions, and the legal-and-compliance risk has shifted from fines to business disruption. The conflict reading is not new, but the operational reading is: broad adoption, narrow value capture, governance as the gating constraint.

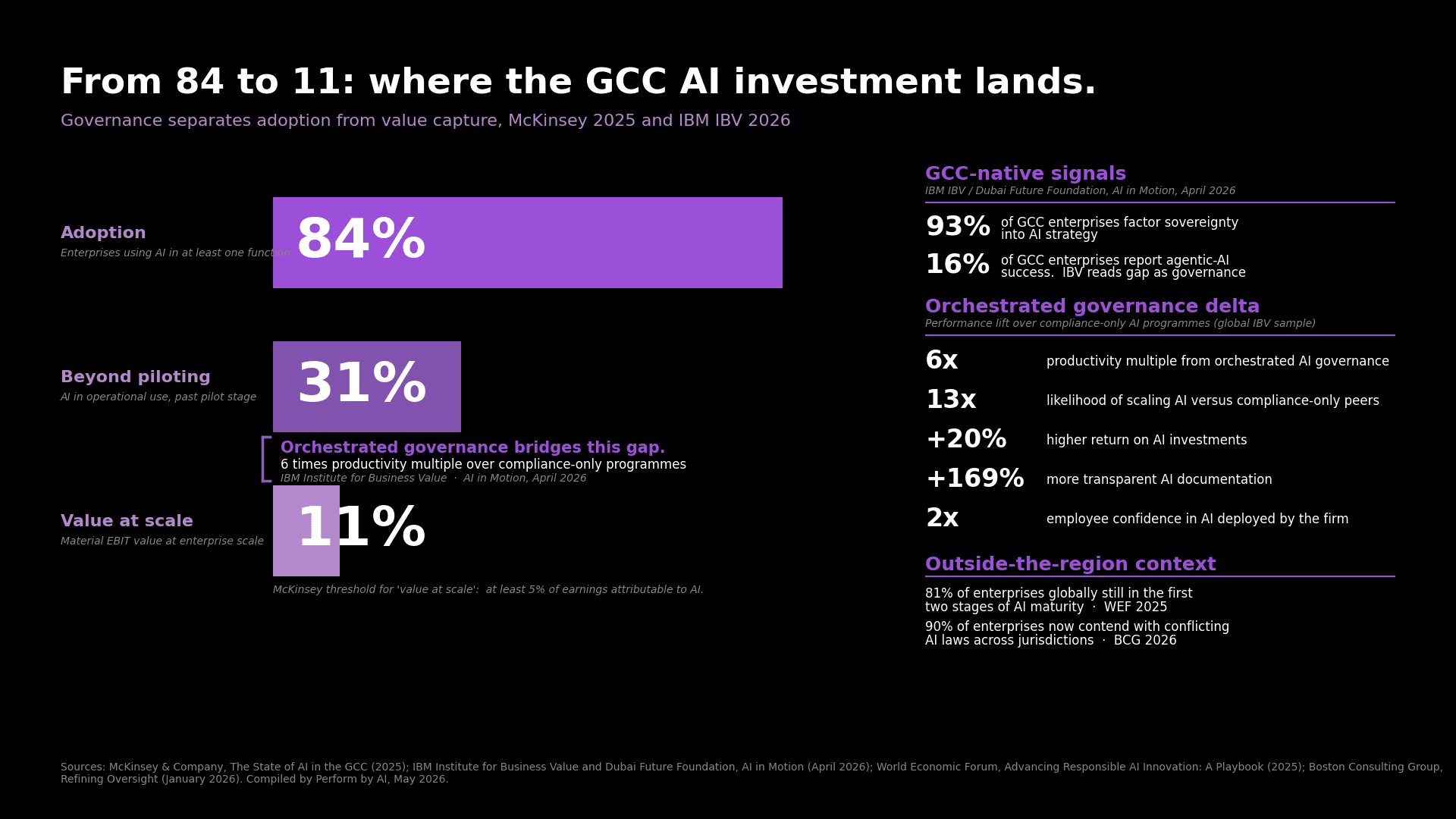

IBM IBV's AI in Motion 2026, co-published with the Dubai Future Foundation, reports 93% of GCC enterprises factoring sovereignty into AI strategy, while 16% report agentic-AI success. The 93% says sovereignty has moved from a negotiable preference to a compliance-binding constraint. The 16% says agentic-AI value capture remains thin, and IBV reads that gap as a governance constraint rather than a technology constraint. The combination is the regional version of BCG's "broad adoption, narrow value" pattern.

How does governance-readiness now price the next AI investment?

The economic stakes are visible in the GCC data. McKinsey's 2025 State of AI in the GCC survey reports 84% adoption (any function), 31% past piloting (operational use), and 11% realising value at scale (material EBIT contribution attributable to AI). The 31% to 11% drop is the value-capture gap, and the gap is widening, not closing.
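The funnel is easier to read as stage-to-stage conversion. The sketch below is derived arithmetic on the three survey percentages quoted above, not a figure McKinsey reports; the stage labels and the `stage_conversions` function are illustrative, and the calculation assumes all three percentages share one respondent base.

```python
# Survey percentages as quoted in the text (share of respondents at each stage).
FUNNEL = [
    ("adoption", 84),
    ("past piloting", 31),
    ("value at scale", 11),
]


def stage_conversions(stages):
    """Percentage of each stage's respondents who also reach the next stage."""
    return [
        (f"{a} -> {b}", round(100 * y / x, 1))
        for (a, x), (b, y) in zip(stages, stages[1:])
    ]


conversions = stage_conversions(FUNNEL)
# End-to-end: share of adopters who realise value at scale.
end_to_end = round(100 * FUNNEL[-1][1] / FUNNEL[0][1], 1)

for label, pct in conversions:
    print(f"{label}: {pct}%")
print(f"adoption -> value at scale: {end_to_end}%")
```

On those assumptions, roughly 37% of adopters are past piloting, about 35% of those realise value at scale, and about 13% of adopters make it through both stages.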

IBV's AI in Motion analysis decomposes the gap. Across the IBV sample, AI programmes that orchestrate governance with deployment achieve a six-times productivity multiple over compliance-only programmes, 13-times scaling likelihood, a 20% higher return on AI investments, 169% more transparent AI documentation, and 2-times higher employee confidence in AI deployed by the firm. The orchestration profile is the operational difference between the 31% and the 11%, and it is sovereignty-design plus governance-discipline plus continuous regulatory tracking, in one operating motion.

This pattern shows up against the global maturity context. The World Economic Forum's Advancing Responsible AI Innovation Playbook (with Accenture, 2025) reports 81% of enterprises globally still in the first two stages of AI maturity (curious or active). The GCC 84% adoption rate sits above the global average, but the value-capture gap is broadly similar. The differentiator at value capture is governance maturity, not adoption velocity.

The position holds against the conventional reading on three counts. First, the regulatory squeeze is structural and will not dissipate: the phased EU obligations, the EO 14365 implementation, the China labelling cadence, and the 99-1 Senate vote are the regime in operation. Second, sovereignty has moved from negotiable preference to compliance-binding from day one; the IBV 93% sovereignty-factoring rate and the regional data residency tracking confirm the shift. Third, adoption velocity alone does not close the gap: three independent datasets show the same governance bottleneck, namely McKinsey's 11% realising value at scale, IBV's 16% agentic-AI success rate, and WEF's 81% still in early maturity.

Before approving the next AI investment, the board now needs three answers in place: governance documented to a recognised standard, sovereignty built into the design from day one, the strictest applicable jurisdiction set as the operational floor. Across the 11% realising value at scale, those answers preceded deployment.

Exhibit 3. McKinsey's GCC adoption-to-value funnel (84% to 31% to 11%) against the IBV orchestrated-governance delta and two GCC-native sovereignty and agentic-AI signals.

Looking ahead

Two predictions follow from the four-mechanism reading.

First: the GCC's binding architecture will tighten before it loosens. The CBUAE examination cycle on AI governance is live now, and findings on examination will surface across the supervised banking sector by end-2026. KSA's copyright law Article 26 takes effect in August 2026, and the Cabinet's Year-of-AI declaration suggests further sectoral instruments through the year. UAE Child Digital Safety full compliance binds in January 2027. The trackers will continue to lag, but the operational reach will widen.

Second: the misclassification cost will become visible. Compliance teams that budgeted against the IAPP "strategy-only" label will find themselves under-resourced for what their regional regulators expect, with the pattern showing up first in financial services examination findings, then in healthcare AI inspection reports, then in DIFC certification refusals. By mid-2027, the cost of reading the GCC as a soft-regulation zone will have priced itself into the audit conversation.

The strongest enterprises through this period will treat the strictest applicable jurisdiction as the operational floor, with governance documented to a recognised standard before deployment and sovereignty built into the design from day one. By December 2027, when the EU AI Act's high-risk obligations bind, the firms that built governance into the AI investment will be the firms still in the European procurement conversation. The firms that read the trackers and deferred the work will be the firms explaining why they are not. The misclassification has eighteen months left to price itself in.